To Study and Implement Single Layer Perceptron for Binary Classification

Introduction

The perceptron is one of the simplest artificial neural network architectures, introduced by Frank Rosenblatt in 1957. It is the simplest type of feedforward neural network, consisting of a layer of input nodes that are fully connected to a layer of output nodes. The perceptron can learn linearly separable patterns and is built from a type of artificial neuron known as the threshold logic unit (TLU). The TLU was first introduced by Warren McCulloch and Walter Pitts in the 1940s. A perceptron has a single layer of threshold logic units, with each TLU connected to all inputs.

Types of perceptron :

- Single-Layer Perceptron : The single-layer perceptron (SLP) is a fundamental concept in artificial neural networks and serves as the building block for more complex models. In practical terms, the single-layer perceptron is a binary classifier that can be used to separate data points into two classes based on a linear decision boundary.

- Multi-Layer Perceptron : A multilayer perceptron (MLP) consists of two or more layers, which gives it enhanced processing capabilities and lets it handle more complex patterns and relationships within the data.

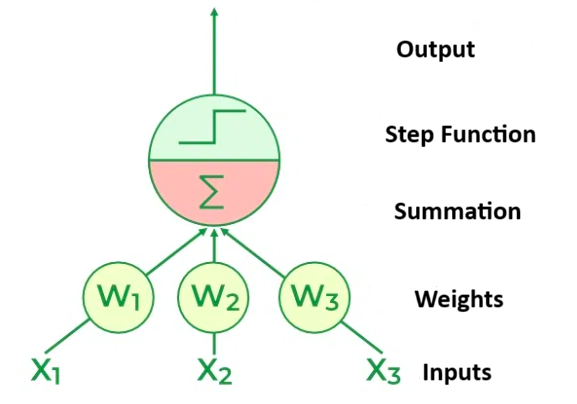

Basic components of perceptron:

- Input layer : The input layer is where the perceptron receives its input features. Each input is associated with a weight, reflecting its importance in the decision-making process.

- Weights : Each input is multiplied by its corresponding weight. These weights determine the strength of the connection between the input and the perceptron.

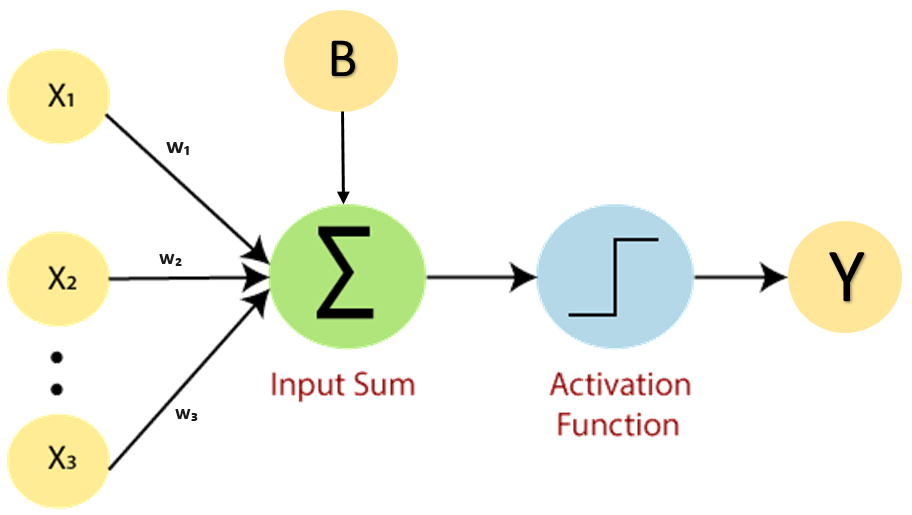

- Bias : The bias plays the same role as the intercept in a linear equation. It is an additional parameter that is added to the weighted sum of the inputs, shifting the neuron's output so that the decision boundary does not have to pass through the origin.

- Summation : The weighted sum of all inputs is computed. Mathematically, this is represented as:

Σ = w1 . x1 + w2 . x2 + ... + wn . xn

where,

w = (w1, ..., wn) is the weight vector,

x = (x1, ..., xn) is an n-dimensional sample from the training dataset.

- Activation Function : The weighted sum, together with the bias, is then passed through an activation function. Commonly, a threshold (step) function is used. The formula for the step activation function is:

Yin = Σ + Bias

f(Yin) = 1, if Yin >= θ

f(Yin) = -1, if Yin < θ

where, θ is the threshold value.

- Output : The output from the activation function is the final output of the perceptron. In a single-layer perceptron, this output is the result of the classification or decision-making process.
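
The components above (weighted sum, bias, step activation, output) can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function name `perceptron_output` and the example weights, bias, and inputs are assumptions chosen only for demonstration, and the threshold θ is assumed to be 0.

```python
def perceptron_output(x, w, bias, theta=0.0):
    """Return +1 or -1 for a single input sample x."""
    # Summation: w1*x1 + w2*x2 + ... + wn*xn
    weighted_sum = sum(wi * xi for wi, xi in zip(w, x))
    # Net input: Yin = weighted sum + bias
    y_in = weighted_sum + bias
    # Step activation: 1 if Yin >= theta, otherwise -1
    return 1 if y_in >= theta else -1


# Illustrative values only (not taken from the text)
weights = [0.5, -0.4]
bias = 0.1

print(perceptron_output([1.0, 1.0], weights, bias))  # net input is about 0.2
print(perceptron_output([0.0, 1.0], weights, bias))  # net input is about -0.3
```

The first sample has a net input above the threshold and is assigned class 1; the second falls below it and is assigned class -1.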

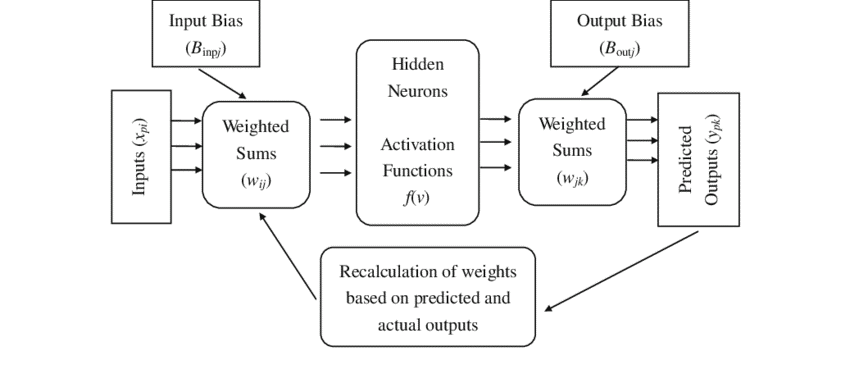

Training Algorithm:

- The training algorithm for the single-layer perceptron is based on the concept of supervised learning.

- The perceptron algorithm adjusts the weights iteratively to minimize the error between the predicted output and the actual output.

- The algorithm updates the weights using the perceptron learning rule (closely related to the delta rule), which calculates the difference between the actual (target) output and the predicted output and adjusts the weights in proportion to that difference.

Fig. 3 Flow Chart of Single Layer Perceptron

Perceptron Learning Rule:

- The perceptron learning rule updates the weights based on the error signal.

- If the predicted output matches the target output, no weight adjustment is made.

- If the predicted output differs from the target output, the weights are adjusted to reduce the error.

- The learning rate, which determines the step size of weight updates, is an important parameter that affects the convergence and stability of the model.

- Equation for perceptron weight adjustment :

Δw = η * d * x

where,

d : Desired Output - Predicted Output (the error; zero when the prediction is correct),

η : Learning Rate, Usually Less than 1,

x : Input Data.
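
A minimal training-loop sketch of this rule follows, with d taken as desired output minus predicted output so that no update occurs for a correct prediction. Several details are assumptions not stated in the text: the names `predict` and `train_perceptron`, zero initial weights, θ = 0, η = 0.5, a stopping condition of one error-free pass, and updating the bias as if it were a weight on a constant input of 1. The OR-gate data with targets in {-1, 1} is only an illustrative linearly separable dataset.

```python
def predict(x, w, bias, theta=0.0):
    """Step-activated perceptron output (+1 or -1) for one sample."""
    y_in = sum(wi * xi for wi, xi in zip(w, x)) + bias
    return 1 if y_in >= theta else -1


def train_perceptron(samples, targets, eta=0.5, max_epochs=100):
    """Apply delta_w = eta * d * x until a full pass makes no errors."""
    w = [0.0] * len(samples[0])  # assumed initialization: all zeros
    bias = 0.0
    for _ in range(max_epochs):
        errors = 0
        for x, t in zip(samples, targets):
            d = t - predict(x, w, bias)  # desired - predicted
            if d != 0:
                # Weight update: w <- w + eta * d * x
                w = [wi + eta * d * xi for wi, xi in zip(w, x)]
                # Bias treated as a weight on a constant input of 1
                bias += eta * d
                errors += 1
        if errors == 0:  # every sample classified correctly
            break
    return w, bias


# Illustrative data: OR gate with bipolar targets
X = [[0, 0], [0, 1], [1, 0], [1, 1]]
T = [-1, 1, 1, 1]

w, b = train_perceptron(X, T)
print(w, b)
print([predict(x, w, b) for x in X])
```

Because the OR data is linearly separable, the loop stops after a few passes and the predictions match the targets.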

Applications:

- Binary Classification : SLPs find application in tasks requiring binary classification, such as spam detection, medical diagnosis, and quality control.

- Logical Operations : SLPs can be used to implement simple logical operations such as AND, OR, and NOT. For example, a perceptron can be trained to perform an AND operation by classifying input pairs as either belonging to the class "1" (true) or "0" (false) based on the logical AND condition.

- Fault Detection in Systems : SLPs can be applied to detect faults or anomalies in systems, where the goal is to classify normal and abnormal conditions based on input data.
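
As a small illustration of the logical-operations case, the sketch below sets the weights and bias by hand rather than learning them. The specific values are one possible choice among many, the helper names (`step01`, `gate`, `and_gate`, `or_gate`, `not_gate`) are hypothetical, and the activation is assumed to output 1/0 (true/false) instead of 1/-1 to match the class labels used above.

```python
def step01(y_in, theta=0.0):
    """Threshold unit with 1/0 outputs (assumed variant of the step function)."""
    return 1 if y_in >= theta else 0


def gate(inputs, weights, bias):
    """Single perceptron: weighted sum plus bias, then threshold."""
    return step01(sum(w * x for w, x in zip(weights, inputs)) + bias)


# Hand-picked parameters; other values would work equally well
def and_gate(a, b):
    return gate([a, b], [1.0, 1.0], -1.5)


def or_gate(a, b):
    return gate([a, b], [1.0, 1.0], -0.5)


def not_gate(a):
    return gate([a], [-1.0], 0.5)


pairs = [(0, 0), (0, 1), (1, 0), (1, 1)]
print([and_gate(a, b) for a, b in pairs])  # [0, 0, 0, 1]
print([or_gate(a, b) for a, b in pairs])   # [0, 1, 1, 1]
print([not_gate(a) for a in (0, 1)])       # [1, 0]
```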

Limitations:

- Linear Separability : One significant limitation is that SLPs can only learn linearly separable functions, so they cannot correctly classify problems that require a non-linear decision boundary.

- Exclusive OR (XOR) Problem : SLPs fail to solve problems like the XOR function, which demands a non-linear separation of input classes.
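
The XOR limitation can be observed empirically with a short experiment. The sketch below is an assumption-laden illustration: the function name `epochs_to_converge`, η = 0.5, zero initial weights, θ = 0, and the cap of 1000 passes are all arbitrary choices. It trains the same perceptron on AND (linearly separable) and on XOR (not linearly separable) and reports whether an error-free pass over the data was ever reached.

```python
def predict(x, w, bias):
    """Step-activated perceptron output (+1 or -1), threshold assumed 0."""
    y_in = sum(wi * xi for wi, xi in zip(w, x)) + bias
    return 1 if y_in >= 0 else -1


def epochs_to_converge(samples, targets, eta=0.5, max_epochs=1000):
    """Return the first epoch with zero errors, or None if never reached."""
    w, bias = [0.0] * len(samples[0]), 0.0
    for epoch in range(1, max_epochs + 1):
        errors = 0
        for x, t in zip(samples, targets):
            d = t - predict(x, w, bias)  # desired - predicted
            if d != 0:
                w = [wi + eta * d * xi for wi, xi in zip(w, x)]
                bias += eta * d
                errors += 1
        if errors == 0:
            return epoch
    return None


X = [[0, 0], [0, 1], [1, 0], [1, 1]]
print("AND:", epochs_to_converge(X, [-1, -1, -1, 1]))  # a small epoch count
print("XOR:", epochs_to_converge(X, [-1, 1, 1, -1]))   # None
```

For XOR no single line separates the two classes, so every pass contains at least one misclassified sample and the function returns `None` regardless of how large `max_epochs` is.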

Extensions:

- To address these limitations, multilayer perceptrons have been developed, which model more complex relationships by adding one or more hidden layers between the input and output layers.
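
To illustrate how a hidden layer helps, the sketch below builds XOR from three threshold units: two hidden units (computing OR and NAND) and one output unit (computing AND of the hidden outputs). The weights are set by hand rather than learned, the names `step`, `neuron`, and `xor_mlp` are hypothetical, and 1/0 outputs are assumed; training such a network in practice requires a different algorithm (for example backpropagation), which is outside the scope of this sketch.

```python
def step(y_in):
    """Threshold unit with 1/0 outputs, threshold assumed 0."""
    return 1 if y_in >= 0 else 0


def neuron(inputs, weights, bias):
    """One threshold logic unit: weighted sum plus bias, then step."""
    return step(sum(w * x for w, x in zip(weights, inputs)) + bias)


def xor_mlp(a, b):
    # Hidden layer: two units looking at the same inputs
    h_or = neuron([a, b], [1.0, 1.0], -0.5)      # fires unless both inputs are 0
    h_nand = neuron([a, b], [-1.0, -1.0], 1.5)   # fires unless both inputs are 1
    # Output layer: AND of the two hidden activations
    return neuron([h_or, h_nand], [1.0, 1.0], -1.5)


print([xor_mlp(a, b) for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]])  # [0, 1, 1, 0]
```

Each hidden unit draws its own linear boundary, and the output unit combines them, which is how the two-layer network represents a decision region that no single line can.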