Music signal synthesis

Audio signals generated by many musical instruments can be approximated as a combination of sinusoids with appropriate frequencies and amplitudes. Thus, musical notes can be synthesized by summing several sinusoids. In this experiment, we will digitally synthesize such signals and listen to them. An example of a musical note is the sound generated by pressing a piano key.

Pure tone signal

A single-frequency continuous-time sinusoid of frequency $f$ is given by

$$x(t) = \sin(2\pi f t)$$

Since a computer can only generate sampled signals, let us fix a sampling rate of $F_s$ samples per second. A digital recording device samples an audio signal at a certain sampling rate (given by the device specifications). Similarly, a digital playback device converts the sampled audio signal into a continuous-time signal at the specified sampling rate. The choice of sampling rate depends on the highest frequency present in the signal and is governed by the Nyquist sampling criterion. Specifically, to sample a signal whose highest frequency is $f_{\max}$, the sampling rate should be chosen as $F_s > 2 f_{\max}$. This allows exact reconstruction of the signal without any loss of information. The sampled signal is given by

$$x[n] = x(nT_s) = \sin(2\pi f n T_s)$$

where $T_s = 1/F_s$ is the sampling interval. A signal containing only one frequency is called a pure tone signal. We will synthesize a single tone signal and listen to its sound for various choices of $f$ and $F_s$.
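The sampling formula above can be tried out with a minimal sketch such as the following. It uses only the Python standard library; the helper names `pure_tone` and `write_wav`, the optional `amplitude` parameter, and the 16-bit mono WAV format are assumptions made for illustration and are not prescribed by this experiment.

```python
import math
import struct
import wave


def pure_tone(f, fs, duration, amplitude=1.0):
    """Return samples x[n] = amplitude * sin(2*pi*f*n/fs) for n = 0 .. N-1."""
    n_samples = int(round(duration * fs))
    return [amplitude * math.sin(2.0 * math.pi * f * n / fs)
            for n in range(n_samples)]


def write_wav(path, samples, fs):
    """Write samples in the range [-1, 1] to a mono 16-bit PCM WAV file."""
    # Clip to [-1, 1] and scale to the signed 16-bit integer range.
    pcm = [int(max(-1.0, min(1.0, s)) * 32767) for s in samples]
    with wave.open(path, "wb") as wav_file:
        wav_file.setnchannels(1)   # mono
        wav_file.setsampwidth(2)   # 2 bytes = 16 bits per sample
        wav_file.setframerate(fs)  # playback rate in samples per second
        wav_file.writeframes(struct.pack("<%dh" % len(pcm), *pcm))
```

For instance, `write_wav("tone.wav", pure_tone(440.0, 8000, 1.0), 8000)` would store one second of a 440 Hz tone sampled at 8000 Hz; both numbers are arbitrary illustrative choices. Changing `f` and `fs` and listening to the result is one way to explore the behaviour described above.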

Aliasing

Aliasing is a phenomenon that occurs when the sampling rate does not satisfy the Nyquist sampling criterion. Because there are too few samples, high frequencies show up as lower frequencies. For example, let the sampling rate be $F_s$ Hz and suppose a pure tone of frequency $f + F_s$ Hz, with $f < F_s/2$, is to be synthesized. Sampling at $t = nT_s$ with $T_s = 1/F_s$, we observe that

$$\sin(2\pi (f + F_s) n T_s) = \sin(2\pi f n T_s + 2\pi n) = \sin(2\pi f n T_s)$$

Thus, a signal of frequency $f + F_s$ Hz is not distinguishable from a signal of frequency $f$ Hz. To avoid aliasing, given a sampling frequency of $F_s$, only frequencies less than $F_s/2$ should be synthesized.
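Aliasing can be checked numerically with a short sketch like the one below. The sampling rate of 8000 Hz and the tone frequencies of 1000 Hz and 9000 Hz are values assumed here purely for illustration, and `sample_tone` is a hypothetical helper name.

```python
import math


def sample_tone(f, fs, n_samples):
    """Return x[n] = sin(2*pi*f*n/fs) for n = 0 .. n_samples-1."""
    return [math.sin(2.0 * math.pi * f * n / fs) for n in range(n_samples)]


fs = 8000            # assumed sampling rate in Hz (illustrative)
f_low = 1000         # below fs/2, so it satisfies the Nyquist criterion
f_high = f_low + fs  # 9000 Hz, above fs/2, so it aliases onto f_low

low = sample_tone(f_low, fs, 64)
high = sample_tone(f_high, fs, 64)

# The two sample sequences agree up to floating-point rounding error.
max_difference = max(abs(a - b) for a, b in zip(low, high))
print("largest sample difference:", max_difference)
```

Since the two sequences of samples are numerically the same, a playback device cannot tell the two tones apart, and both would be heard as the lower frequency.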

Envelope

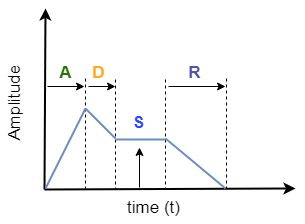

Most audio signals from musical instruments have a characteristic amplitude envelope, corresponding to the gradual rise and fall of the volume at the beginning and end of a note. Thus, when artificially synthesizing music signals, an envelope is applied to make the audio signal sound natural. One such example is the attack-decay-sustain-release (ADSR) envelope. An example figure is shown below:

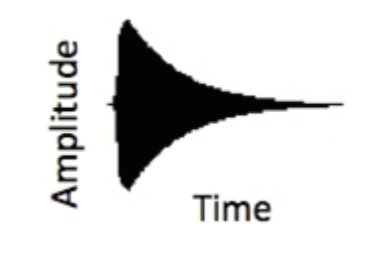

Another example is the exponential decay envelope, shown in the figure below:
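Both kinds of envelope can be generated with a sketch along the following lines. The piecewise-linear shape of the ADSR segments, the default timings and sustain level, the `time_constant` parameter, and all function names are assumptions chosen for illustration; real instruments and other synthesizers may shape their envelopes differently.

```python
import math


def adsr_envelope(n_samples, fs, attack=0.05, decay=0.1,
                  sustain_level=0.7, release=0.2):
    """Piecewise-linear ADSR envelope; times in seconds, levels in [0, 1]."""
    n_attack = int(round(attack * fs))
    n_decay = int(round(decay * fs))
    n_release = int(round(release * fs))
    n_sustain = n_samples - n_attack - n_decay - n_release
    if n_sustain < 0:
        raise ValueError("note is too short for the requested ADSR times")

    env = []
    # Attack: rise linearly from 0 towards 1.
    env += [n / n_attack for n in range(n_attack)]
    # Decay: fall linearly from 1 towards the sustain level.
    env += [1.0 - (1.0 - sustain_level) * n / n_decay for n in range(n_decay)]
    # Sustain: hold the sustain level.
    env += [sustain_level] * n_sustain
    # Release: fall linearly from the sustain level towards 0.
    env += [sustain_level * (1.0 - n / n_release) for n in range(n_release)]
    return env


def exponential_envelope(n_samples, fs, time_constant=0.3):
    """Exponential decay e[n] = exp(-n / (fs * time_constant))."""
    return [math.exp(-n / (fs * time_constant)) for n in range(n_samples)]


def apply_envelope(samples, envelope):
    """Multiply a signal by an envelope of the same length, sample by sample."""
    return [s * e for s, e in zip(samples, envelope)]
```

An envelope is applied by multiplying it with the tone sample by sample, which is what `apply_envelope` does.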

Harmonics

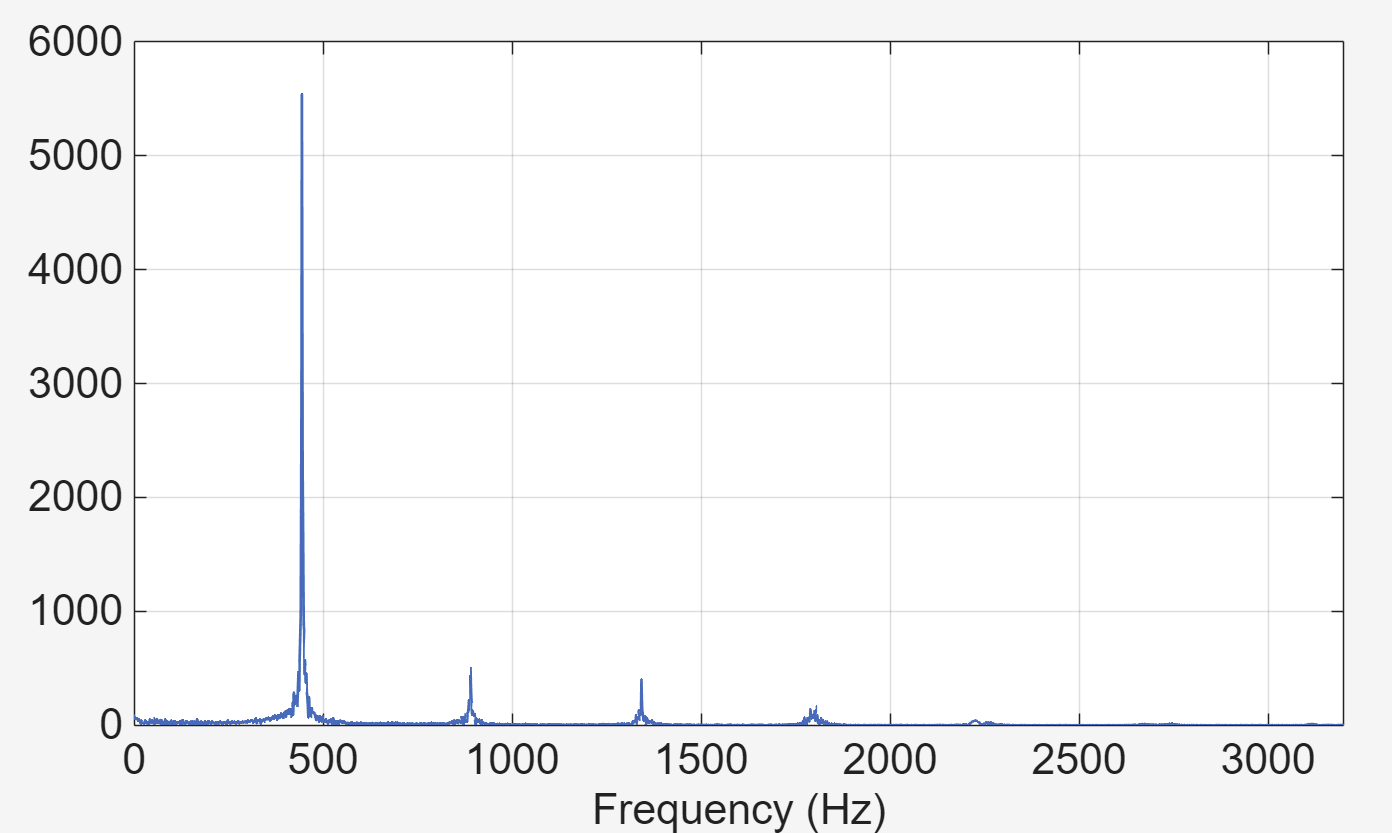

Sinusoidal signals with frequencies $2f_0$, $3f_0$, etc. are called harmonics of the frequency $f_0$, i.e., their frequencies are integer multiples of the base frequency $f_0$. In a typical musical note, multiple harmonics are present in varying proportions. For example, the Fourier transform of the signal produced by a key (A minor) of a piano is plotted below:

On performing Fourier analysis, the presence of the fundamental frequency $f_0$ and its harmonics can be seen. A piano note can be synthetically generated by combining all these harmonics (with appropriate weights) and applying an amplitude envelope. The time series representation of a piano signal is given by

$$x(t) = e(t) \sum_{k=1}^{K} a_k \sin(2\pi k f_0 t)$$

where $a_k$ is the weight of the $k$-th harmonic, $K$ is the number of harmonics, and $e(t)$ represents an amplitude envelope.
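A weighted sum of harmonics with an amplitude envelope can be sketched as follows. The function name `synth_note`, the exponential envelope, the `decay_time` parameter, and the final normalization are assumptions made for this illustration; the harmonic weights of a real piano note would have to be read off its Fourier transform.

```python
import math


def synth_note(f0, fs, duration, weights, decay_time=0.5):
    """Sum harmonics k*f0 with the given weights under an exponential envelope."""
    n_samples = int(round(duration * fs))
    note = []
    for n in range(n_samples):
        t = n / fs
        # Skip any harmonic at or above fs/2 to avoid aliasing.
        value = sum(
            a_k * math.sin(2.0 * math.pi * k * f0 * t)
            for k, a_k in enumerate(weights, start=1)
            if k * f0 < fs / 2
        )
        note.append(math.exp(-t / decay_time) * value)
    # Normalize so the largest sample has magnitude 1 (guarding against silence).
    peak = max((abs(s) for s in note), default=0.0)
    return [s / peak for s in note] if peak > 0 else note
```

For example, `synth_note(220.0, 8000, 1.0, [1.0, 0.5, 0.25, 0.125])` would produce a one-second note; the fundamental of 220 Hz and the weights are hypothetical values, not measurements from the figure.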

Musical tune

A tune consists of a sequence of musical notes played in succession. Each note consists of a fundamental frequency and its harmonics in specified proportions (depending on the musical instrument). Each note can have a different duration within the tune. Using sinusoidal building blocks with appropriate amplitude envelopes, we will synthesize musical tunes in this experiment.
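Putting these pieces together, a tune could be assembled roughly as in the sketch below. The score format (a list of frequency and duration pairs), the harmonic weights, the exponential envelope, and the particular notes are all assumptions made for illustration.

```python
import math


def note_samples(f0, fs, duration, weights=(1.0, 0.5, 0.25), decay_time=0.3):
    """One note: weighted harmonics of f0 under an exponential decay envelope."""
    n_samples = int(round(duration * fs))
    samples = []
    for n in range(n_samples):
        t = n / fs
        value = sum(
            a_k * math.sin(2.0 * math.pi * k * f0 * t)
            for k, a_k in enumerate(weights, start=1)
            if k * f0 < fs / 2  # keep only harmonics below fs/2
        )
        samples.append(math.exp(-t / decay_time) * value)
    return samples


def synth_tune(score, fs):
    """Concatenate the notes in score, a list of (frequency_hz, duration_s) pairs."""
    tune = []
    for f0, duration in score:
        tune.extend(note_samples(f0, fs, duration))
    return tune


fs = 8000  # assumed sampling rate in Hz
# Illustrative score: C4, D4, E4, C4 in equal temperament, durations in seconds.
score = [(261.63, 0.4), (293.66, 0.4), (329.63, 0.4), (261.63, 0.8)]
tune = synth_tune(score, fs)
print("tune length in samples:", len(tune))
```

Because each note restarts its envelope from full amplitude, the notes are heard as separate events rather than as one continuous tone. The samples are not normalized here, so they should be scaled into the range expected by the playback format before being written out.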