Linear Regression

Linear Regression is one of the most fundamental and widely used algorithms in Machine Learning and Statistical Modelling. It belongs to the category of supervised learning algorithms, where the model is trained using labelled data that contains both input variables (features) and the corresponding output variable (target).

The goal of Linear Regression is to identify and quantify the relationship between variables so that future outcomes can be predicted. The algorithm assumes that the relationship between the dependent variable and independent variables can be approximated using a linear equation.

For example, linear regression can be used to model relationships such as House price prediction based on area and number of rooms, Sales prediction based on advertising expenditure, Student performance prediction based on study hours, and Once the model learns the relationship from historical data, it can be used to predict continuous numerical values for new input data.

1. Simple Linear Regression

Simple Linear Regression models the relationship between one independent variable (X) and one dependent variable (Y). The objective is to find the best-fitting straight line, known as the regression line, that represents the relationship between the two variables.

The regression line is determined using the equation:

This line represents the predicted value of Y for a given value of X.

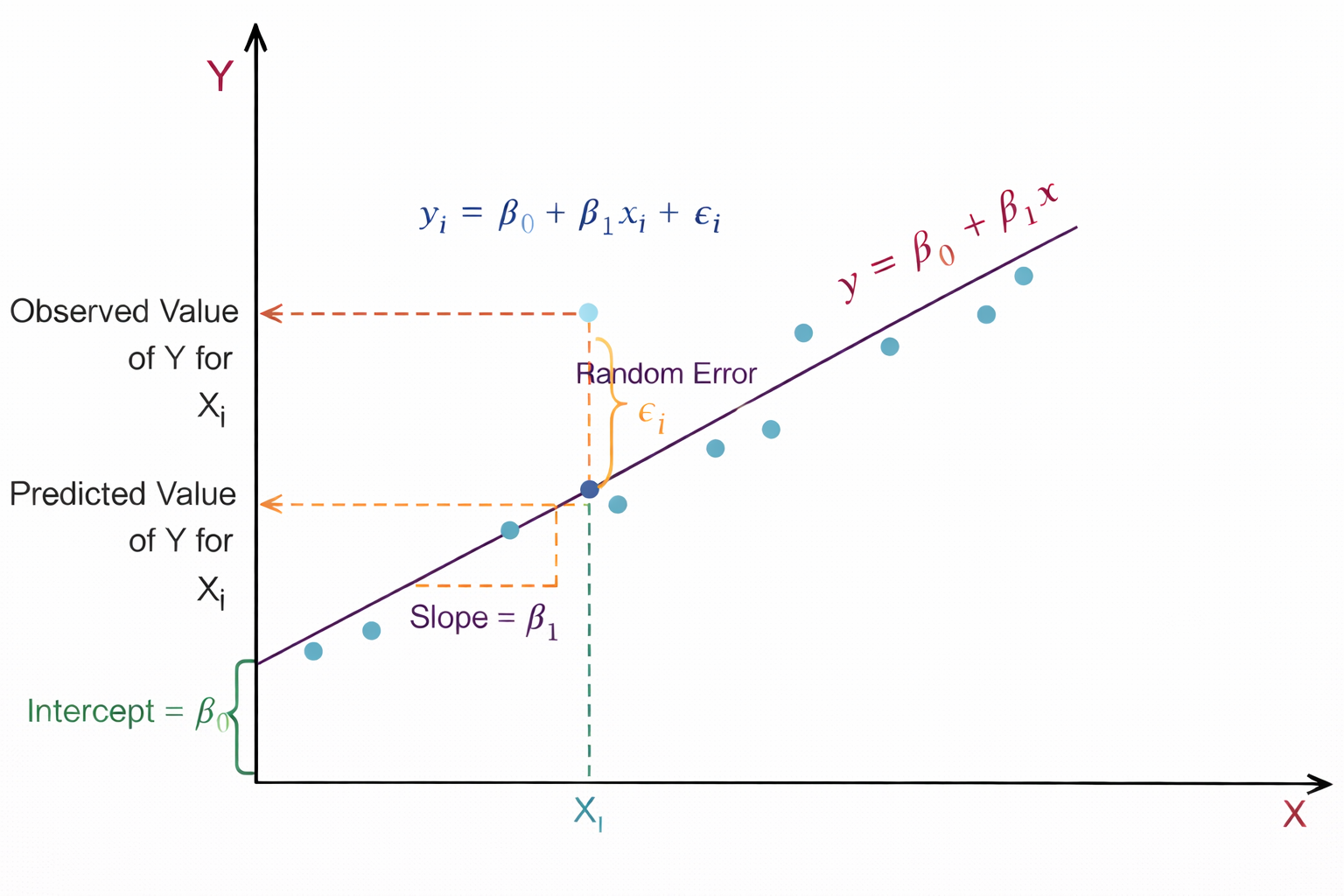

Figure 1: Linear regression showing slope, intercept, and prediction error.

As shown in Figure 1, the relationship between the independent variable X and the dependent variable Y is represented using a linear regression model. The straight line y = β0 + β1x represents the regression line, which predicts the value of Y for a given value of X. Here, β0 is the intercept, which indicates the value of Y when X = 0, and β1 represents the slope of the line, showing how much Y changes for a unit change in X. The light blue points in the graph represent the actual observed data points. For a particular value Xi, the model predicts a value of Y on the regression line, known as the predicted value, while the actual data point represents the observed value. The vertical distance between these two values is called the random error or residual εi.

This residual shows the difference between the predicted value given by the model and the actual observed value. The dashed lines in the graph help visualize the observed value, predicted value, and the slope of the regression line, illustrating how linear regression fits a line that best represents the overall trend of the data.

2. Multiple Linear Regression

Multiple Linear Regression extends the concept of simple linear regression by considering two or more independent variables to predict the dependent variable. This allows the model to capture more complex relationships in the data where multiple factors influence the outcome.

The general equation of multiple linear regression is:

Each coefficient represents the contribution of a specific feature to the predicted value, while keeping other variables constant.

where,

- Y: Dependent variable (target)

- X1, X2, ..., Xn: Independent variables (features)

- β0: Intercept

- β1, β2, ..., βn: Regression coefficients

- ε: Error term (residual)

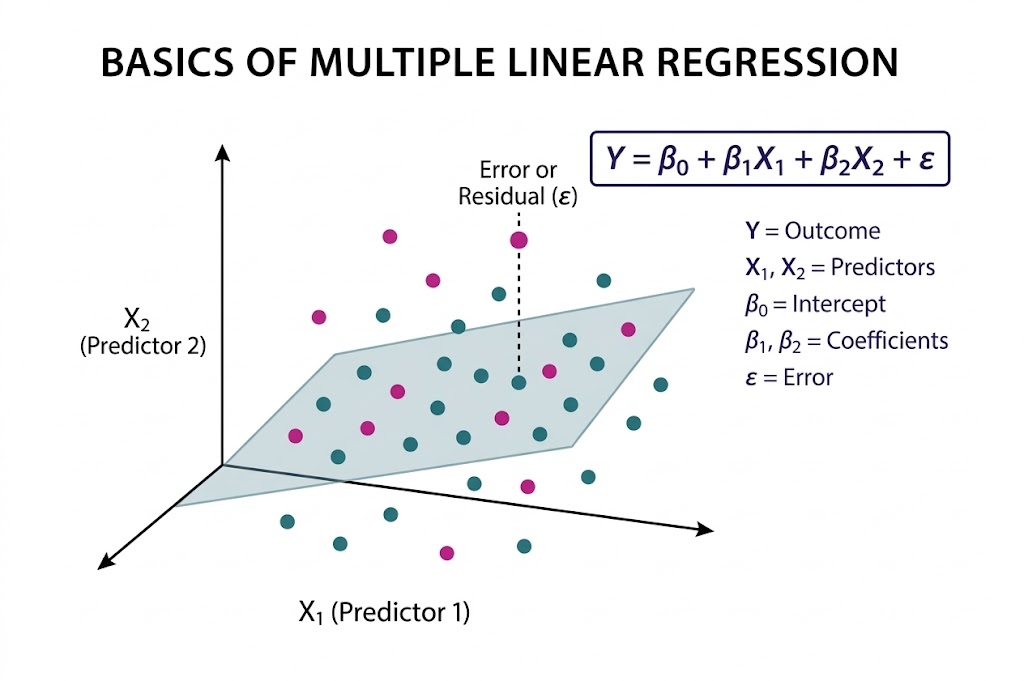

Figure 2: Multiple linear regression showing the regression plane and residual error.

As illustrated in Figure 2, Multiple Linear Regression is a statistical technique used to model the relationship between two or more independent variables and a single dependent variable by fitting a best-fit plane through a three-dimensional scatter plot of data points. The model is expressed as Y = β0 + β1X1 + β2X2 + ε, where Y represents the dependent variable, X1 and X2 are the predictors, β0 is the intercept, β1 and β2 are the coefficients corresponding to each predictor, and ε denotes the error term.

The fitted plane represents the predicted values of the dependent variable; however, the actual data points generally do not lie exactly on this plane. The vertical distance between an observed data point and the plane, indicated by the dashed line, is known as the residual or error. The objective of the model is to minimize these residuals, thereby improving prediction accuracy. This approach enables the model to quantify the contribution of each independent variable and helps in understanding how different predictors influence the outcome.

3. Method of Least Squares

The parameters of the regression model (β₀ and β₁) are estimated using the Least Squares Method. The main idea of this method is to determine the regression line that minimizes the total prediction error.

The error is measured using the Residual Sum of Squares (RSS), which is calculated as the sum of squared differences between the observed values and the predicted values.

where,

- Yi: actual value

- ŷi: predicted value

By minimizing RSS, the algorithm finds the best possible line that fits the data points.

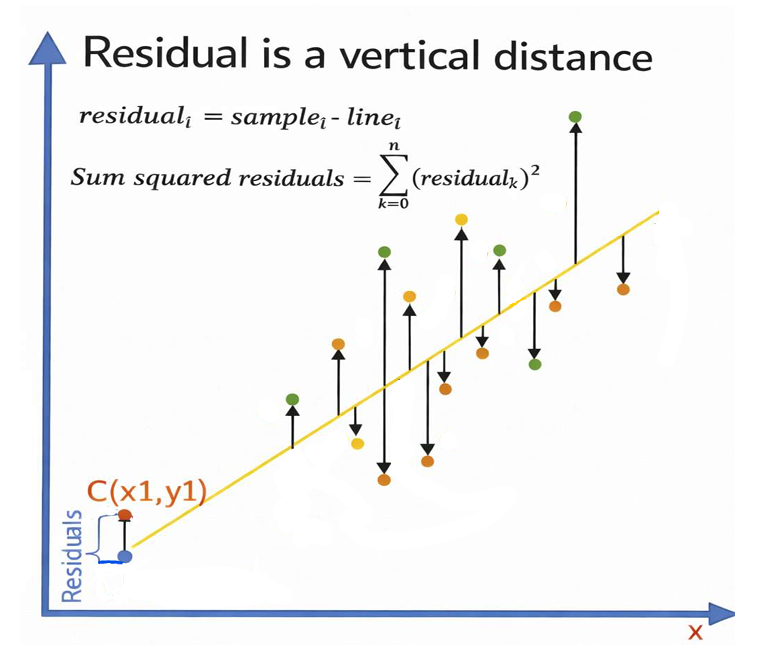

Figure 3: Residuals as vertical distances between observed points and the regression line.

Figure 3 illustrates the concept of residuals in linear regression. The X-axis represents the independent variable x, while the Y-axis represents the dependent variable y. The slanted line shown in the figure is the regression line, which represents the predicted relationship between x and y. The scattered colored points represent the actual observed data points. For each data point, a vertical arrow is drawn between the observed value and the regression line. This vertical distance is called the residual, which represents the difference between the actual observed value and the predicted value given by the regression model. Mathematically, the residual is expressed as residuali = samplei − linei. The figure also shows the formula for the Sum of Squared Residuals (SSR), ∑(residualk)2, which is used in linear regression to measure how well the regression line fits the data. A smaller sum of squared residuals indicates a better fit of the model to the observed data. The point labeled C(x1, y1) demonstrates a specific example where the vertical difference between the observed point and the predicted point on the regression line represents the residual.

4. Interpretation of Regression Coefficients

The coefficients in a regression model provide meaningful insights into the relationship between variables.

Intercept (β0): Represents the value of the dependent variable when all independent variables are zero.

Slope(β1): Indicates how much the dependent variable changes when the independent variable increases by one unit.

Regression coefficients (β1, β2, ...): Strength and direction of influence of each feature.

For example, if β1 = 2, then for one-unit increase in X, predicted Y increases by 2 units (all else constant).

5. Algorithm

Step 1: Let X be the input features and (Y) be output values.

Step 2: Assume a linear relationship:

β0 is the intercept (value when all x = 0)

β1, β2, ... are coefficients (weights) for each feature

Step 3: Define the error (residual) for each data point:

Error = Actual value - Predicted value

- ei = yi - (β0 + β1x1i + β2x2i + ...)

Step 4: Calculate total error using Sum of Squared Errors (SSE):

- SSE =(ei)2 =n∑i=1(yi − ŷi)2n∑i=1

Step 5: Find coefficients that minimize SSE using Normal Equation:

- β = (XTX)-1XTy

Where X is the feature matrix and Y is the target vector

Step 6: For new data, predict using:

- ŷ = β0 + β1x1 + β2x2 + ... + βnxn

6. Assumptions of Linear Regression

- Linearity: The relationship between independent and dependent variables is linear

- Independence: Observations are independent of each other.

- Homoscedasticity: Constant variance of residuals across all values of X.

- Normality: Residuals are normally distributed.

7. Applications of Linear Regression

Linear Regression is widely used in many real-world applications, including:

- Sales forecasting

- Price prediction

- Risk assessment

- Demand estimation

- Economic trend analysis

- Healthcare data analysis

Due to its simplicity and interpretability, linear regression is often used as a baseline model in many machine learning problems.

8. Merits of Linear Regression

- Simplicity and interpretability: Linear Regression is easy to understand and implement. Model coefficients have clear statistical meaning, indicating the magnitude and direction of feature influence on the target variable

- Effective for Linearly Related Data: Performs well when the true relationship between features and target is approximately linear and assumptions are reasonably satisfied.

9. Demerits of Linear Regression

- Linearity Assumption: The model cannot capture non-linear relationships unless manual feature transformations (e.g., polynomial terms) are applied.

- Sensitivity to Outliers: Least Squares estimation squares residuals, giving excessive weight to outliers, which can significantly distort the model.

- Multicollinearity issues: High correlation among independent variables leads to unstable coefficient estimates and reduced interpretability in Multiple Linear Regression.